A picture paints a thousand words

A project run by the IIASA Ecosystem Services and Management Program (ESM) demonstrates how satellite imagery linked with a micro-tasking application such as Picture Pile can support humanitarian efforts. By asking users to answer simple yes-or-no questions, the application employs the help of citizens to detect damaged buildings and other structures over a large area affected by disaster. The idea is to help provide a quick initial damage assessment, so that help can quickly reach those who need it. The workflow established during this project sets the foundation and protocol for future post-disaster damage assessment projects.

According to the World Bank’s global risk analysis, around 34% of the world’s population live in areas of high mortality risk from two or more natural hazards. This means that timely and innovative methods to rapidly assess damage and subsequently aid relief and recovery efforts are critical. In recent years, several crowdsourcing-based tools have been developed to aid post-disaster damage assessment by engaging citizens in tasks such as data collection, satellite image analysis, and online interactive mapping. One such tool is Picture Pile, a cross-platform application designed as a generic and flexible tool for ingesting satellite imagery for rapid classification.

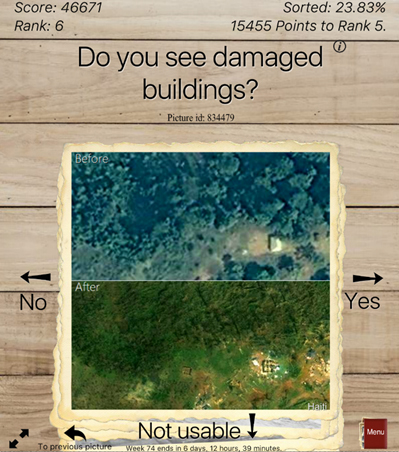

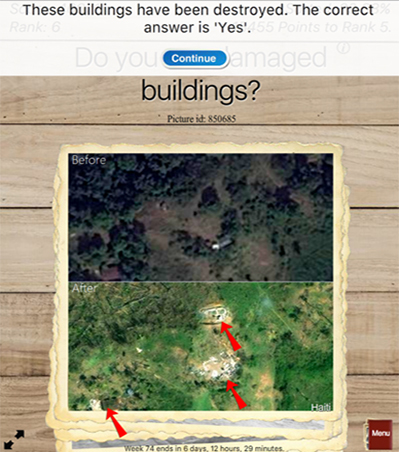

As part of the European Space Agency’s Crowd4Sat initiative led by Imperative Space, the team developed a workflow employing Picture Pile for rapid post-disaster damage assessment. In a paper prepared for the European Geosciences Union General Assembly [1], the researchers outline how satellite image interpretation tasks within Picture Pile can be crowdsourced using the example of Hurricane Matthew, which affected large regions of Haiti in October 2016. The application provides simple micro-tasks, where the user is presented with satellite images and asked simple yes-or-no questions. A “before” disaster satellite image is displayed next to an “after” disaster image, and the user is asked to assess whether there is any detectable damage. The question is formulated precisely to focus the user’s attention on a particular aspect of the damage. The user interface of Picture Pile is built so that users can rapidly classify the images by swiping to indicate their answer, thereby efficiently completing the micro-task.

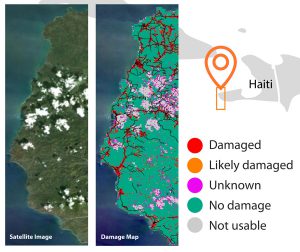

Results of post-disaster damage assessment: a DigitalGlobe (WorldView-3) satellite image of the affected area from 10 October 2016 (left) and the result of the post-disaster damage assessment using Picture Pile (right).

This approach will not only help to increase citizen awareness of natural disasters, but will also provide them with a unique opportunity to contribute directly to relief efforts. To ensure confidence in the crowdsourced results, quality assurance methods were integrated during the testing phase of the application using image classifications from experts. The application has a built-in real-time quality assurance system to provide volunteers with feedback when their answer does not correspond with that of an expert.
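The expert-based feedback described above can be illustrated with a minimal Python sketch. It is not Picture Pile's actual implementation: the `Volunteer` class, the `review_answer` function, the tile identifiers, and the idea of tracking an agreement rate are all hypothetical names and assumptions introduced here for illustration. The sketch only shows the general pattern of comparing a volunteer's yes-or-no answer with an expert classification of the same image and returning feedback on a mismatch.

```python
from dataclasses import dataclass


@dataclass
class Volunteer:
    """Hypothetical record of one volunteer's answers on expert-classified images."""
    name: str
    checked: int = 0  # answers given on images that also have an expert label
    agreed: int = 0   # of those, answers that matched the expert

    @property
    def agreement_rate(self) -> float:
        # Share of expert-checked answers that matched; 0.0 if none checked yet.
        return self.agreed / self.checked if self.checked else 0.0


def review_answer(volunteer, image_id, answer, expert_labels):
    """Compare a yes/no answer (True/False) with the expert label, if one exists.

    Returns a feedback message when the answer differs from the expert's,
    and None when it matches or when no expert label is available.
    """
    if image_id not in expert_labels:
        return None  # image was not classified by an expert, nothing to compare
    volunteer.checked += 1
    if answer == expert_labels[image_id]:
        volunteer.agreed += 1
        return None
    expected = "yes" if expert_labels[image_id] else "no"
    return f"An expert answered '{expected}' for image {image_id}."


# Assumed example data: True means "damage visible", False means "no damage".
expert_labels = {"tile-001": True, "tile-002": False}
volunteer = Volunteer("demo")
for image_id, answer in [("tile-001", True), ("tile-002", True), ("tile-003", False)]:
    feedback = review_answer(volunteer, image_id, answer, expert_labels)
    if feedback:
        print(feedback)
print(f"Agreement with experts: {volunteer.agreement_rate:.0%}")
```

In this sketch, only images with an expert classification count toward the agreement rate, so answers on unchecked images neither help nor penalize a volunteer.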

Picture Pile is intended to supplement existing approaches to post-disaster damage assessment and can be used by different networks of volunteers, for example, the Humanitarian OpenStreetMap Team, to assess damage and create up-to-date maps of response to disaster events. The application has the potential to be integrated into, and to enhance, existing services that provide rapid post-disaster damage assessments, such as the EU’s Copernicus Emergency Management Service (Copernicus EMS).

References

[1] Danylo O, Sturn T, Giovando C, Moorthy I, Fritz S, See L, Kapur R, Girardot B, Ajmar A, Tonolo FG, Reinicke T, Mathieu PP, Duerauer M (2017) Picture Pile: tool for rapid post-disaster damage assessments. European Geosciences Union General Assembly 2017, EGU2017-19266

Collaborators

- Imperative Space, UK

- Humanitarian OpenStreetMap Team (HOT), USA

- European Space Agency (ESA)

Top image © Frans Delian | Shutterstock